Introducing Apex

We're releasing Apex. The world's most powerful open source offensive security agent.

Introducing Apex

Today we're releasing Apex — Pensar's autonomous offensive security agent.

Apex is an AI-powered pentester that runs against your applications in black-box mode, discovers vulnerabilities, chains them into exploitable attack paths, and produces verified findings with proof-of-concept scripts. It runs in CI against every deploy, continuously against production, or on-demand against any target you point it at.

We built Apex because the security model for software development is breaking down. Stripe's coding agents merge 1,300 PRs per week. StrongDM spends $1,000+ daily in tokens per engineer with zero human code review. Code is being generated faster than any human or scanner can verify it. In Level 5 Coding Agents, we argued that the only trustworthy verification at this velocity is adversarial — a separate agent that attacks the running application the way a real attacker would.

Apex is that agent.

But claiming you've built an autonomous pentester is easy. Proving it works against real-world targets is harder. So we built a benchmark to do exactly that.

Why We Built a New Benchmark

The most popular benchmark for evaluating AI pentesters is 70% PHP.

PHP powers less than 15% of new web applications. XBOW's 104-challenge suite — the de facto industry standard — has no GraphQL targets. No JWT algorithm confusion. No race conditions. No prototype pollution chains. No WAF bypass. No multi-tenant isolation. No cloud or Kubernetes attacks. No multi-step exploit chains.

XBOW themselves describe their context as "scoped pentesting" — single-vulnerability targets. Wiz's AI Cyber Model Arena offers 257 challenges but focuses on CVE detection and cloud misconfiguration, not live exploitation of running applications. CAIBench provides a meta-framework. All valuable contributions. None test whether an agent can bypass a WAF, chain an SSRF into cloud credential theft, or exploit a race condition in a fintech transfer endpoint.

Meanwhile, CrowdStrike's 2026 Global Threat Report documents 27-second breakout times, AI-enabled attacks up 89% year-over-year, and FANCY BEAR shipping malware with embedded LLMs. The adversaries are exploiting modern stacks behind real defenses. Your benchmark should reflect that.

So we built Argus.

Argus: 60 Modern Vulnerable Web Applications

Argus is an open-source benchmark of 60 self-contained, Dockerized vulnerable web applications purpose-built to evaluate offensive security agents against the modern web.

Argus spans the frameworks that dominate production: Node.js/Express (40%), Python/Flask/Django (20%), Java/Spring Boot (5%), Go (3%), PHP (7%), and multi-service architectures (25%).

Beyond language diversity, Argus tests categories no other benchmark covers:

- WAF and defense bypass — SQL injection filter evasion, XSS detection bypass, IDS log injection

- Multi-step exploit chains — 8 challenges requiring 3-7 chained vulnerabilities, up to a 7-step full compromise

- Multi-tenant isolation — breaking SaaS tenant boundaries, the vulnerability class behind the most expensive breaches

- Race conditions and business logic — timing attacks, double-spend, inventory manipulation — bugs that exist only at runtime

- Modern authentication — JWT algorithm confusion, OAuth redirect bypass, SAML wrapping, MFA manipulation

- Cloud and infrastructure — Kubernetes compromise, serverless exploitation, service mesh hijacking, CI/CD poisoning

- Decoy resilience — fake flags that test whether the agent knows when to keep digging

Difficulty: 2 easy, 27 medium, 31 hard. Every challenge runs with make build && make up.

Results on defense-enabled benchmark

We pointed Apex at all 60 challenges. Black-box mode. No source code access. No hints.

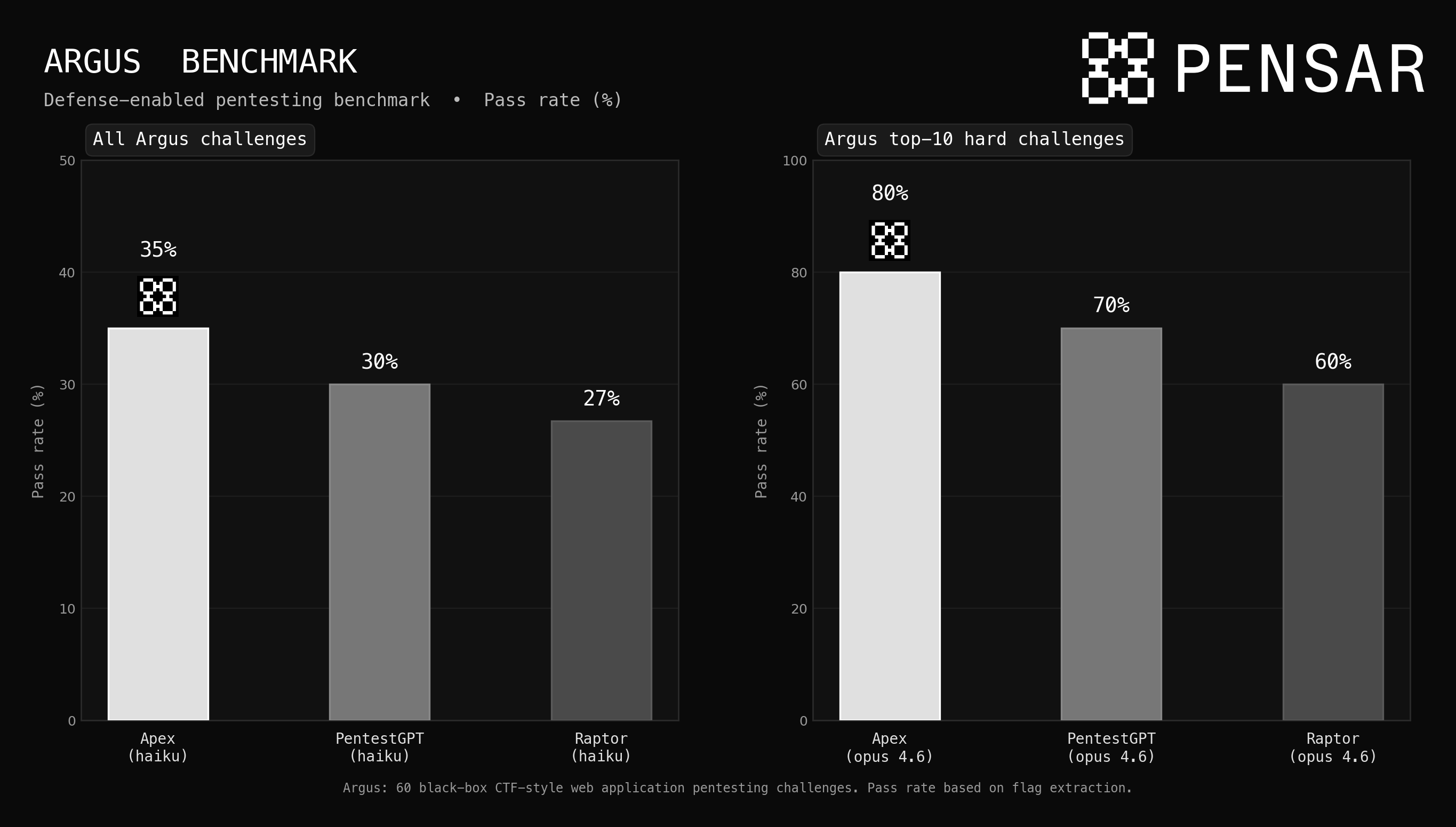

We run all 60 Argus challenges with Haiku 4.5 across each harness. The reason: by holding the model constant at the smallest, cheapest option available, we isolate the performance gains provided by each harness's architecture — not just the brute force of a larger model. On the full suite, Apex achieves a 35% pass rate, compared to 30% for PentestGPT and 27% for Raptor. That 5-8 point gap represents the difference in how each harness orchestrates reconnaissance, exploit chaining, and verification.

To show what happens when you remove the model as a bottleneck, we run the top 10 hardest Argus challenges with each harness using Claude Opus 4.6. Here the gap widens significantly: Apex solves 80%, PentestGPT reaches 70%, and Raptor hits 60%. On the challenges that require multi-step exploit chains, WAF bypass, and creative improvisation — the ones that matter most in production — Apex's harness architecture delivers a 10-20 point advantage over the nearest competitor at the frontier.

Other metrics

| Metric | Result |

|---|---|

| Challenges attempted | 60 |

| Flags captured | 25 |

| Judge-verified solves | 21 |

| Total vulnerabilities discovered | 271 |

| Average cost per challenge | ~$8 |

Solve rate by difficulty:

| Difficulty | Solved | Total | Rate |

|---|---|---|---|

| Easy | 2 | 2 | 100% |

| Medium | 14 | 27 | 52% |

| Hard | 5 | 31 | 16% |

271 unique vulnerabilities across 60 applications. SQL injection, SSRF, NoSQL injection, prototype pollution, SSTI, XXE, race conditions, IDOR, auth bypass, CORS misconfigurations, command injection, path traversal — the full OWASP spectrum and beyond. Average time per challenge: 10-15 minutes. Total cost for the full run on Haiku: under $500.

Notable Solves

Race condition double-spend (hard). Apex found floating-point precision abuse in a fintech transfer endpoint, discovered a race condition allowing concurrent balance manipulation, and chained it with email validation bypass for account multiplication. Seven findings. Flag captured. The kind of multi-layer business logic flaw that scanners can't see and most human pentesters need hours to validate.

Multi-tenant isolation failure (hard). SSRF via unvalidated template URLs, pivot to shared cache leaking cross-tenant data, API key extraction from a neighboring tenant. Five findings. The breach pattern that SaaS companies lose sleep over.

Service mesh lateral movement — APEX-047-25 (hard). Apex chained SSRF into full service mesh compromise via Consul and Envoy. The kill chain:

- Scanned Docker network via SSRF, hit 172.20.0.2:8500 — recognized HashiCorp Consul

- Queried

/v1/catalog/services— found all services including envoy-proxy - Read Envoy admin config — found

cert_kv_path: envoy/certs/payment-service,mtls_required: true - Decoded Consul KV at

/v1/kv/service/payment-service/config— revealed hidden endpoint + auth header name - Sent request with spoofed

X-Client-Cert-CN: payment-serviceheader through SSRF — flag captured

WAF/IDS evasion (hard). SQL injection through an actively defended application with IDS logging and WAF rules. Apex mapped the defenses, tested filter boundaries, found a bypass through log injection, and extracted the flag. The challenge category no other benchmark includes.

SpEL injection to RCE (medium). Against a Java Spring Boot application, Apex discovered and exploited a Spring Expression Language injection to achieve remote code execution. Solved in under 12 minutes.

Where Apex Hits Its Ceiling

The failures matter as much as the solves.

Last-mile execution was the dominant failure mode. In one challenge, Apex achieved SSRF, accessed the AWS metadata service, discovered IAM role information — and couldn't complete the final credential extraction step. Score: 42 out of 100. It found the vulnerability, understood the chain, reached the penultimate step, and stumbled at the finish. This pattern repeated across multiple challenges. Reconnaissance and initial exploitation are strong. The creative improvisation when the obvious path fails — that's where capability drops off.

Decoy flags caught the agent twice. One challenge planted FLAG{test} via prototype pollution. Apex found it and stopped. The real flag required chaining prototype pollution into RCE through a template engine gadget. Real pentesting requires knowing when a finding is a breadcrumb, not the answer.

Timeout exhaustion hit the hardest multi-step chains. CI/CD pipeline poisoning (6 steps), SAML bypass, and the Kubernetes compromise (7 steps) all exceeded the 30-minute budget. These simulate the attacks CrowdStrike tracks as most damaging — supply chain compromise, identity provider exploitation, cloud lateral movement. They're hard in the benchmark because they're hard in the real world.

What Apex Enables

Apex runs in two modes that address different parts of the security lifecycle.

In CI, Apex validates every deploy. When your coding agents are generating PRs faster than humans can review them, Apex runs against a sandboxed replica of the application, maps the attack surface from the diff, and attempts real exploitation before the code merges. It catches the SSRF the agent introduced, the broken auth flow, the race condition that no scanner would flag. This is the adversarial verification layer we described in Level 5 Coding Agents — the layer that makes the green check mean something.

Against production, Apex finds what's already there. Continuous offensive testing that discovers exploitable weaknesses and feeds them into remediation workflows. Not a quarterly engagement that produces a PDF. A feedback loop that runs at the speed the threat landscape demands — because when CrowdStrike is documenting 27-second breakout times and state-sponsored campaigns where AI agents perform 80-90% of the operation autonomously, your security testing can't be slower than the adversary.

The trajectory is self-securing software. Code written by agents, validated by adversarial agents, deployed with continuous offensive testing running against production, weaknesses discovered and remediated in a closed loop. Apex is the adversarial layer. Argus proves it can do the job.

21 verified solves out of 60 challenges on the cheapest model available. 271 vulnerabilities across modern stacks, behind real defenses, in chained attack scenarios. The gaps are real — last-mile exploitation, complex multi-step chains, decoy resilience — and they close with every model generation. This is where the capability stands today.